An AI accelerator module is specialized hardware built to run AI workloads much faster and more efficiently than a general-purpose CPU. It works as a co-processor alongside the host CPU and is typically built around an ASIC or another dedicated AI chip. The module handles the compute-intensive neural network operations, such as large matrix multiplications and convolutions, while the CPU manages system tasks. This division of labor delivers high performance, low latency, and power efficiency. That combination is especially critical for edge computing applications such as industrial automation, robotics, and video analytics.

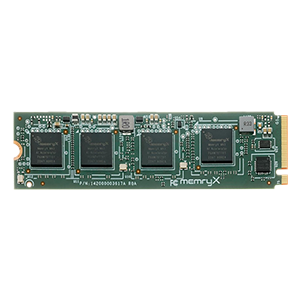

AI accelerator modules come in several form factors to meet diverse deployment needs. The choice of form factor directly affects integration complexity, performance scalability, and suitability for the target environment.